I have a lot of respect for Scientific American contributing physics editor George Musser's willingness to solicit and publish articles on some fairly speculative and, especially, foundational, topics whether in string theory, cosmology, the foundations of quantum theory, quantum gravity, or quantum information. I've enjoyed and learned from these articles even when I haven't agreed with them. (OK, I haven't enjoyed all of them of course... a few have gotten under my skin.) I've met George myself, at the most recent FQXi conference; he's a great guy and was very interested in hearing, both from me and from others, about cutting-edge research. I also have a lot of respect for his willingness to dive in to a fairly speculative area and write an article himself, as he has done with "A New Enlightenment" in the November 2012 Scientific American (previewed here). So although I'm about to critique some of the content of that article fairly strongly, I hope it won't be taken as mean-spirited. The issues raised are very interesting, and I think we can learn a lot by thinking about them; I certainly have.

The article covers a fairly wide range of topics, and for now I'm just going to cover the main points that I, so far, feel compelled to make about the article. I may address further points later; in any case, I'll probably do some more detailed posts, maybe including formal proofs, on some of these issues.

The basic organizing theme of the article is that quantum processes, or quantum ideas, can be applied to situations which social scientists usually model as involving the interactions of "rational agents"...or perhaps, as they sometimes observe, agents that are somewhat rational and somewhat irrational. The claim, or hope, seems to be that in some cases we can either get better results by substituting quantum processes (for instance, "quantum games", or "quantum voting rules") for classical ones, or perhaps better explain behavior that seems irrational. In the latter case, in this article, quantum theory seems to be being used more as a metaphor for human behavior than as a model of a physical process underlying it. It isn't clear to me whether we're supposed to view this as an explanation of irrationality, or in some cases as the introduction of a "better", quantum, notion of rationality. However, the main point of this post is to address specifics, so here are four main points; the last one is not quantum, just a point of classical political science.

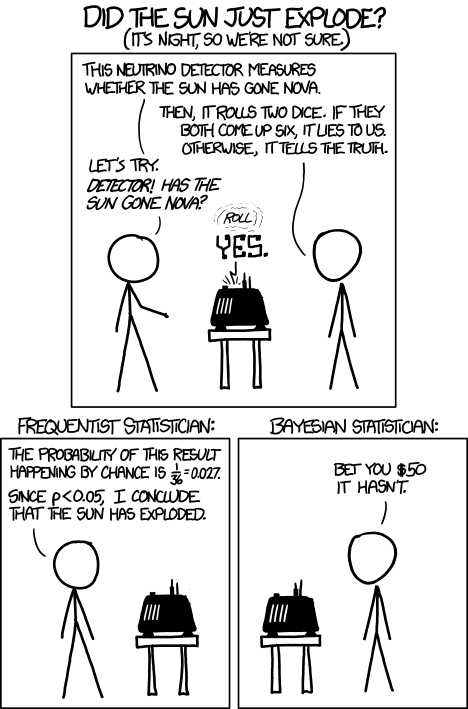

(1) Quantum games. There are many points to make on this topic. Probably most important is this one: quantum theory does not resolve the Prisoner's Dilemma. Under the definitions I've seen of "quantum version of a classical game", the quantum version is also a classical game, just a different one. Typically the strategy space is much bigger. The classical strategies sit somewhere in that larger strategy space, typically as a basis for a complex vector space ("quantum state space") of strategies, or as a commuting ("classical") subset of the possible set of "quantum actions" (often unitary transformations, say, that the players can apply to physical systems that are part of the game-playing apparatus). One can then set things up so that one can compare the expected payoff of the solution, under various solution concepts such as Nash equilibrium, for the classical game and its "quantum version", and it may be that the quantum version has a better result for all players, using the same solution concept. This was so for Eisert, Lewenstein, and Wilkens' (ELW for short) quantum version of Prisoner's Dilemma. But this does not mean (nor, in their article, did ELW claim it did) that quantum theory "solves the Prisoner's Dilemma", although I suspect when they set out on their research, they might have had hope that it could. It doesn't, because the prisoners can't transform their situation into the quantum Prisoner's Dilemma; to play that game, whether by quantum or classical means, would require the jailer to do something differently. ELW's quantum Prisoner's Dilemma involves starting with an entangled state of two qubits. The state space consists of the unit Euclidean norm sphere in a 4-dimensional complex vector space (equipped with Euclidean inner product); it has a distinguished orthonormal basis which is a product of two local "classical bases", each of which is labeled by the two actions available to the relevant player in the classical game. 
However the quantum game consists of each player choosing a unitary operator to perform on their local state. Payoff is determined---and here is where the jailer must be complicit---by performing a certain two-qubit unitary---one which does not factor as a product of local unitaries---and then measuring in the "classical product basis", with payoffs given by the classical payoff corresponding to the label of the basis vector corresponding to the result. Now, Musser does say that "Quantum physics does not erase the original paradoxes or provide a practical system for decision making unless public officials are willing to let people carry entangled particles into the voting booth or the police interrogation room." But the situation is worse than that. Even if prisoners could smuggle in the entangled particles (and in some realizations of prisoners' dilemma in settings other than systems of detention, the players will have a fairly easy time supplying themselves with such entangled pairs, if quantum technology is feasible at all), they won't help unless the rest of the world, implementing the game, implements the desired game, i.e. unless the mechanism producing the payoffs doesn't just measure in a product basis, but implements the desired game by measuring in an entangled basis. Even more importantly, in many real-world games, the variables being measured are already highly decohered; to ensure that they are quantum coherent the whole situation has to be rejiggered. So even if you didn't need the jailer to make an entangled measurement---if the measurement was just his independently asking each one of you some question---if all you needed was to entangle your answers---you'd have to either entangle your entire selves, or covertly measure your particle and then repeat the answer to the jailer. 
But in the latter case, you're not playing the game where the payoff is necessarily based on the measurement result: you could decide to say something different from the measurement result. And that would have to be included in the strategy set.
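
To make the role of the jailer concrete, here is a minimal numerical sketch of a game of this shape. The specific choices are assumptions not spelled out in this post: the payoff numbers 3, 0, 5, 1, the entangling gate `J`, the two-parameter family `strategy(theta, phi)` and the move `Q` follow the conventions usually attributed to ELW as best I recall them, and all function names are hypothetical.

```python
import cmath
import math

# Basis order: |CC>, |CD>, |DC>, |DD>  (C = stay mum, D = snitch).
# Assumed illustrative payoffs for players A and B in that basis order.
PAYOFF_A = [3, 0, 5, 1]
PAYOFF_B = [3, 5, 0, 1]


def kron(a, b):
    """Kronecker product of two 2x2 matrices, giving a 4x4 matrix."""
    return [[a[i][k] * b[j][l] for k in range(2) for l in range(2)]
            for i in range(2) for j in range(2)]


def matvec(m, v):
    """Matrix times column vector."""
    return [sum(m[r][c] * v[c] for c in range(len(v))) for r in range(len(m))]


def dagger(m):
    """Conjugate transpose of a square matrix."""
    n = len(m)
    return [[m[c][r].conjugate() for c in range(n)] for r in range(n)]


def strategy(theta, phi):
    """Local unitary move U(theta, phi) in the assumed two-parameter family."""
    c, s = math.cos(theta / 2), math.sin(theta / 2)
    return [[cmath.exp(1j * phi) * c, s],
            [-s, cmath.exp(-1j * phi) * c]]


C = strategy(0, 0)            # classical "stay mum" (identity)
D = strategy(math.pi, 0)      # classical "snitch"
Q = strategy(0, math.pi / 2)  # a non-classical move, diag(i, -i)

# Entangling gate J = (1 + i D(x)D) / sqrt(2); it is not a product of local unitaries.
FLIP = [[0, 1], [-1, 0]]
FLIP2 = kron(FLIP, FLIP)
J = [[((1 if r == c else 0) + 1j * FLIP2[r][c]) / math.sqrt(2) for c in range(4)]
     for r in range(4)]
J_DAG = dagger(J)


def payoffs(ua, ub, entangled_measurement=True):
    """Expected payoffs (A, B). The referee prepares J|CC>, the players apply
    ua and ub locally, and the referee measures in the product basis, first
    applying J^dagger only if entangled_measurement is True."""
    state = matvec(kron(ua, ub), matvec(J, [1, 0, 0, 0]))
    if entangled_measurement:
        state = matvec(J_DAG, state)
    probs = [abs(x) ** 2 for x in state]
    return (sum(p * w for p, w in zip(probs, PAYOFF_A)),
            sum(p * w for p, w in zip(probs, PAYOFF_B)))
```

Under these assumptions, `payoffs(Q, Q)` matches the mutual-cooperation payoff and `payoffs(D, Q)` leaves the defector worse off, but only when the referee applies the entangled measurement. With `entangled_measurement=False`, the same moves on the same entangled pair give a different game, which is the point about the jailer's complicity.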

There are still potential applications: if we are explicitly designing games as mechanisms for implementing some social decision procedure, then we could decide to implement a quantum version (according to some particular "quantization scheme") of a classical game. Of course, as I've pointed out, and as ELW do in their paper, that's just another classical game. But as ELW note, it is possible---in a setting where quantum operations (quantum computer "flops") aren't too much more expensive than their classical counterparts---that playing the game by quantum means might use less resources than playing it by simulating it classically. In a mechanism design problem that is supposed to scale to a large number of players, it even seems possible that the classical implementation could scale so badly with the number of players as to become infeasible, while the quantum one could remain efficient. For this reason, mechanism design for preference revelation as part of a public goods provision scheme, for instance, might be a good place to look for applications of quantum prisoners-dilemma like games. (I would not be surprised if this has been investigated already.)

Another possible place where quantum implementations might have an advantage is in situations where one does not fully trust the referee who is implementing the mechanism. It is possible that quantum theory might enable the referee to provide better assurances to the players that he/she has actually implemented the stated game. In the usual formulation of game theory, the players know the game, and this is not an issue. But it is not necessarily irrelevant in real-world mechanism design, even if it might not fit strictly into some definitions of game theory. I don't have a strong intuition one way or the other as to whether or not this actually works but I guess it's been looked into.

(2) "Quantum democracy". The part of the quote, in the previous item, about taking entangled particles into the voting booth, alludes to this topic. Gavriel Segre has a 2008 arxiv preprint entitled "Quantum democracy is possible" in which he seems to be suggesting that quantum theory can help us with the difficulties that Arrow's Theorem supposedly shows exist with democracy. I will go into this in much more detail in another post. But briefly, if we consider a finite set A of "alternatives", like candidates to fill a single position, or mutually exclusive policies to be implemented, and a finite set I of "individuals" who will "vote" on them by listing them in the order they prefer them, a "social choice rule" or "voting rule" is a function that, for every "preference profile", i.e. every possible indexed set of preference orderings (indexed by the set of individuals), returns a preference ordering, called the "social preference ordering", over the alternatives. The idea is that then whatever subset of alternatives is feasible, society should choose the one most highly ranked by the social preference ordering, from among those alternatives that are feasible. Arrow showed that, when there are at least three alternatives, if we impose the seemingly reasonable requirements that if everyone prefers x to y, society should prefer x to y ("unanimity") and that whether or not society prefers x to y should be affected only by the information of which individuals prefer x to y, and not by other aspects of individuals' preference orderings ("independence of irrelevant alternatives", "IIA"), the only possible voting rules are the ones such that, for some individual i called the "dictator" for the rule, the rule is that that individual's preferences are the social preferences. If you define a democracy as a voting rule that satisfies the requirements of unanimity and IIA and that is not dictatorial, then "democracy is impossible". Of course this is an unacceptably thin concept of individual and of democracy. 
But anyway, there's the theorem; it definitely tells you something about the limitations of voting schemes, or, in a slightly different interpretation, about the impossibility of forming a reasonable idea of what is a good social choice, if all that we can take into account in making the choice is a potentially arbitrary set of individuals' orderings over the possible alternatives.
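
As a small illustration of what the IIA requirement rules out, the following sketch checks one familiar non-dictatorial rule, the Borda count, on two made-up preference profiles. The Borda count is not discussed in this post; it, the profiles, and the function names are assumptions chosen only to show a rule that satisfies unanimity but fails IIA.

```python
def borda_scores(profile):
    """Borda count: with m alternatives, each ballot gives m-1 points to its
    top choice, m-2 to the next, and so on down to 0."""
    m = len(profile[0])
    scores = {alt: 0 for alt in profile[0]}
    for ballot in profile:
        for position, alt in enumerate(ballot):
            scores[alt] += m - 1 - position
    return scores


def socially_prefers(profile, x, y):
    """True if the Borda rule ranks x strictly above y for this profile."""
    scores = borda_scores(profile)
    return scores[x] > scores[y]


def pairwise_pattern(profile, x, y):
    """Which individuals rank x above y: the only information IIA allows
    the social ranking of x versus y to depend on."""
    return [ballot.index(x) < ballot.index(y) for ballot in profile]


# Five hypothetical voters; each ballot lists alternatives from best to worst.
profile_1 = [("a", "b", "c")] * 3 + [("b", "c", "a")] * 2
profile_2 = [("a", "c", "b")] * 3 + [("b", "c", "a")] * 2

same_individual_views = (pairwise_pattern(profile_1, "a", "b")
                         == pairwise_pattern(profile_2, "a", "b"))
social_view_changed = (socially_prefers(profile_1, "a", "b")
                       != socially_prefers(profile_2, "a", "b"))
```

Every voter ranks a against b the same way in both profiles, yet the social ranking of a against b flips because three voters moved c. That dependence on the "irrelevant" alternative c is exactly what IIA forbids.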

Arrow's theorem tends to have two closely related interpretations: as a mechanism for combining actual individual preferences to obtain social preferences that depend in desirable ways on individual ones, or as a mechanism for combining formal preference orderings stated by individuals, into a social preference ordering. Again this is supposed to have desirable properties, and those properties are usually motivated by the supposition that the stated formal preference orderings are the individuals' actual preferences, although I suppose in a voting situation one might come up with other motivations. But even if those are the motivations, in the voting interpretation, the stated orderings are somewhat like strategies in a game, and need not coincide with agents' actual preference orderings if there are strategic advantages to be had by letting these two diverge.

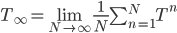

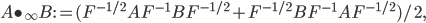

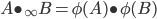

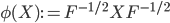

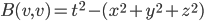

What could a quantum mitigation of the issues raised by Arrow's theorem---on either interpretation---mean? We must be modifying some concept in the theorem... that of an individual's preference ordering, or voting strategy, or that of alternative, or---although this seems less promising---that of individual---and arguing that somehow that gets us around the problems posed by the theorem. None of this seems very promising, for reasons I'll get around to in my next post. The main point is that if the idea is similar to the --- as we've seen, dubious --- idea that superposing strategies can help in quantum games, it doesn't seem to help with interpretations where the individual preference ordering is their actual preference ordering. How are we to superpose those? Superposing alternatives seems like it could have applications in a many-worlds type interpretation of quantum theory, where all alternatives are superpositions to begin with, but as far as I can see, Segre's formalism is not about that. It actually seems to be more about superpositions of individuals, but one of the big motivational problems with Segre's paper is that what he "quantizes" is not the desired Arrow properties of unanimity, independence of irrelevant alternatives, and nondictatoriality, but something else that can be used as an interesting intermediate step in proving Arrow's theorem. However, there are bigger problems than motivation: Segre's main theorem, his IV.4, is very weak, and actually does not differentiate between quantum and classical situations. As I discuss in more detail below, it looks like for the quantum logics of most interest for standard quantum theory, namely the projection lattices of von Neumann algebras, the dividing line between ones having what Segre would call a "democracy", a certain generalization of a voting rule satisfying Arrow's criteria, and ones that don't (i.e. 
that have an "Arrow-like theorem") is not commutativity versus noncommutativity of the algebra (i.e., classicality versus quantumness), but just infinite-dimensionality versus finite-dimensionality, which was already understood for the classical case. So quantum adds nothing. In a later post, I will go through (or post a .pdf document) all the formalities, but here are the basics.

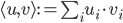

Arrow's Theorem can be proved by defining a set S of individuals to be decisive if for every pair x,y of alternatives, whenever everyone in S prefers x to y, and everyone not in S prefers y to x, society prefers x to y. Then one shows that the set of decisive sets is an ultrafilter on the set of individuals. What's an ultrafilter? Well, let's define it for an arbitrary lattice. The set, often called P(I), of subsets of any set I, is a lattice (the relevant ordering is subset inclusion, the defined meet and join are intersection and union). A filter---not yet ultra---in a lattice is a subset of the lattice that is upward-closed, and meet-closed. That is, to say that F is a filter is to say that if x is in F, and y is greater than or equal to x, then y is in F, and that if x and y are both in F, so is x meet y. For P(I), this means that a filter has to include every superset of each set in the filter, and also the intersection of every pair of sets in the filter. Then we say a filter is proper if it's not the whole lattice, and it's an ultrafilter if it's a maximal proper filter, i.e. it's not properly contained in any other filter (other than the whole lattice). A filter is called principal if it's generated by a single element of the lattice: i.e. if it's the smallest filter containing that element. Equivalently, it's the set consisting of that element and everything above it. So in the case of P(I), a principal filter consists of a given set, and all sets containing that set.

To prove Arrow's theorem using ultrafilters, one shows that unanimity and IIA imply that the set of decisive sets is an ultrafilter on P(I). But it was already well known, and is easy to show, that all ultrafilters on the powerset of a finite set are principal, and are generated by singletons of I, that is, sets containing single elements of I. So a social choice rule satisfying unanimity and IIA has a decisive set containing a single element i, and furthermore, all sets containing i are decisive. In other words, if i favors x over y, it doesn't matter who else favors x over y and who opposes it: x is socially preferred to y. In other words, the rule is dictatorial. QED.
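
For a very small electorate, the fact about ultrafilters on a finite powerset can be checked by brute force. The sketch below uses a hypothetical three-person electorate and hypothetical function names; it also assumes the common convention that a filter is nonempty, which the definition above leaves implicit.

```python
from itertools import combinations

ELECTORATE = (0, 1, 2)  # a small, hypothetical set I of individuals
SUBSETS = [frozenset(c)
           for r in range(len(ELECTORATE) + 1)
           for c in combinations(ELECTORATE, r)]


def is_filter(family):
    """Nonempty, upward-closed, and closed under pairwise intersection."""
    if not family:
        return False
    upward = all(t in family for s in family for t in SUBSETS if s <= t)
    meets = all((s & t) in family for s in family for t in family)
    return upward and meets


def all_families():
    """Every family of subsets of I, i.e. every subset of the powerset P(I)."""
    n = len(SUBSETS)
    for mask in range(1 << n):
        yield frozenset(SUBSETS[k] for k in range(n) if (mask >> k) & 1)


# Proper filters: filters that are not the whole lattice P(I).
proper_filters = [f for f in all_families()
                  if is_filter(f) and len(f) < len(SUBSETS)]

# Ultrafilters: proper filters not strictly contained in another proper filter.
ultrafilters = [f for f in proper_filters
                if not any(f < g for g in proper_filters)]


def generator(filt):
    """Intersection of all members of the filter."""
    return frozenset.intersection(*filt)


def is_principal(filt):
    """True if the filter is exactly the set of supersets of its generator."""
    g = generator(filt)
    return filt == frozenset(s for s in SUBSETS if g <= s)
```

For this electorate the search finds exactly three ultrafilters, each consisting of all sets containing one particular individual, so each corresponds to a would-be dictator. The enumeration is exponential in the size of the powerset and is only meant as a sanity check for tiny cases.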

Note that it is crucial here that the set I is finite. If you assume the axiom of choice (no pun intended ahead of time), then non-principal ultrafilters do exist in the lattice of subsets of an infinite set, and the more abstract-minded people who have thought about Arrow's theorem and ultrafilters have indeed noticed that if you are willing to generalize Arrow's conditions to an infinite electorate, whatever that means, the theorem doesn't generalize to that situation. The standard existence proof for a non-principal ultrafilter is to use the axiom of choice in the form of Zorn's lemma to establish that any proper filter is contained in a maximal one (i.e. an ultrafilter) and then take the set of subsets whose complement (in I) is finite, show it's a filter, and show its extension to an ultrafilter is not principal. Just for fun, we'll do this in a later post. I wouldn't summarize the situation by saying "infinite democracies exist", though. As a sidelight, some people don't like the fact that the existence proof is nonconstructive.

As I said, I'll give the details in a later post. Here, we want to examine Segre's proposed generalization. He defines a quantum democracy to be a nonprincipal ultrafilter on the lattice of projections of an "operator-algebraically finite von Neumann algebra". In the preprint there's no discussion of motivation, nor are there explicit generalizations of unanimity and IIA to corresponding quantum notions. To figure out such a correspondence for Segre's setup we'd need to convince ourselves that social choice rules, or ones satisfying one or the other of Arrow's properties, are related one to one to their sets of decisive coalitions, and then relate properties of the rule (or the remaining property), to the decisive coalitions' forming an ultrafilter. Nonprincipality is clearly supposed to correspond to nondictatorship. But I won't try to tease out, and then critique, a full correspondence right now, if one even exists.

Instead, let's look at Segre's main point. He defines a quantum logic as a non-Boolean orthomodular lattice. He defines a quantum democracy as a non-principal ultrafilter in a quantum logic. His main theorem, IV.4, as stated, is that the set of quantum democracies is non-empty. Thus stated, of course, it can be proved by showing the existence of even one quantum logic that has a non-principal ultrafilter. These do exist, so the theorem is true.

However, there is nothing distinctively quantum about this fact. Here, it's relevant that Segre's Theorem IV.3 as stated is wrong. He states (I paraphrase to clarify scope of some quantifiers) that L is an operator-algebraically finite orthomodular lattice all of whose ultrafilters are principal if, and only if, L is a classical logic (i.e. a Boolean lattice). But this is false. It's true that to get his theorem IV.4, he doesn't need this equivalence. But what is a von Neumann algebra? It's a *-algebra consisting of bounded operators on a Hilbert space, closed in the weak operator topology. (Or something isomorphic in the relevant sense to one of these.) There are commutative and noncommutative ones. And there are finite-dimensional ones and infinite-dimensional ones. The finite-dimensional ones include: (1) the algebra of all bounded operators on a finite-dimensional Hilbert space (under operator multiplication and the adjoint operation), which is noncommutative for dimension > 1; (2) the algebra of complex functions on a finite set I (under pointwise multiplication and complex conjugation); and (3) finite products (or if you prefer the term, direct sums) of algebras of these types. (Actually we could get away with just type (1) and finite products since the type (2) ones are just finite direct sums of one-dimensional instances of type (1).) The projection lattices of the cases (2) are isomorphic to P(I) for I the finite set. These are the projection lattices for which Arrow's theorem can be proved using the fact that they have no nonprincipal ultrafilters. The cases (1) are their obvious quantum analogues. And it is easy to show that in these cases, too, there are no nonprincipal ultrafilters. Because the lattice of projections of a von Neumann algebra is complete, one can use essentially the same proof as for the case of P(I) for finite I. 
So for the obvious quantum analogues of the setups where Arrow's theorem is proven, the analogue of Arrow's theorem does hold, and Segre's "quantum democracies" do not exist.
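
One way the finite-dimensional step can be filled in is sketched below. It is offered as an assumption about how the argument can go, using the rank of a projection rather than completeness, and is not necessarily the proof intended above. Here F is any nonempty proper filter in the projection lattice of a finite-dimensional von Neumann algebra.

```latex
\begin{align*}
&\text{Choose } p \in F \text{ of minimal rank (possible because all ranks are finite).}\\
&\text{For any } q \in F:\quad p \wedge q \in F
  \quad\text{and}\quad p \wedge q \le p,
  \quad\text{so } \operatorname{rank}(p \wedge q) \le \operatorname{rank}(p).\\
&\text{Minimality of } \operatorname{rank}(p) \text{ gives }
  \operatorname{rank}(p \wedge q) = \operatorname{rank}(p),
  \text{ hence } p \wedge q = p, \text{ i.e. } p \le q.\\
&\text{Therefore } F = \{\, q : q \ge p \,\}, \text{ which is the principal filter generated by } p.
\end{align*}
```

Since every proper filter is principal in this setting, so is every ultrafilter, whether or not the algebra is commutative.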

Moreover, Alex Wilce pointed out to me in email that essentially the same proof as for P(I) with I infinite, gives the existence of a nonprincipal ultrafilter for any infinite-dimensional von Neumann algebra: one takes the set of projections of cofinite rank (i.e. whose orthocomplementary projection has finite rank), shows it's a filter, extends it (using Zorn's lemma) to an ultrafilter, and shows that's not principal. So (if the dividing line between finite-dimensional and infinite-dimensional von Neumann algebras is precisely that their lowest-dimensional faithful representations are on finite-dimensional Hilbert spaces, which seems quite likely) the dividing line between projection lattices of von Neumann algebras on which Segre-style "democracies" (nonprincipal ultrafilters) exist, is precisely that between finite and infinite dimension, and not that between commutativity and noncommutativity. I.e. the existence or not of a generalized decision rule satisfying a generalization of the conjunction of Arrow's conditions has nothing to do with quantumness. (Not that I think it would mean much for social choice theory or voting if it did.)

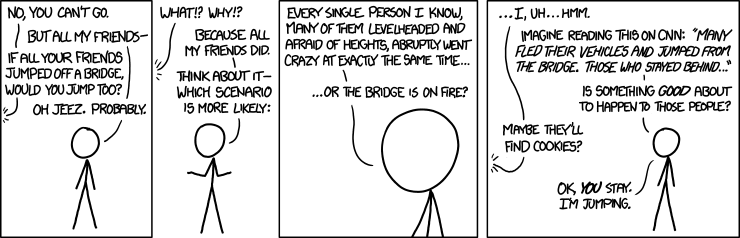

(3) I'll only say a little bit here about "quantum psychology". Some supposedly paradoxical empirical facts are described at the end of the article. When subjects playing Prisoner's Dilemma are told that the other player will snitch, they always (nearly always? there must be a few mistakes...) snitch. When they are told that the other player will stay mum, they usually also fink, but sometimes (around 20% of the time---it is not stated whether this is typical of a single individual in repeated trials, or a percentage of individuals in single trials) stay mum. However, if they are not told what the other player will do, "about 40% of the time" they stay mum. Emmanuel Pothos and Jerome Busemeyer devised a "quantum model" that reproduced the result. As described in Sci Am, Pothos interprets it in terms of destructive interference between (amplitudes associated with, presumably) the 100% probability of snitching when the other snitches and the 80% probability of snitching when the other does not, which reduces the probability to 60% when they are not sure whether the other will snitch. It is a model; they do not claim that quantum physics of the brain is responsible. However, I think there is a better explanation, in terms of what Douglas Hofstadter called "superrationality", Nigel Howard called "metarationality", and I like to call a Kantian equilibrium concept, after the version of Kant's categorical imperative that urges you to act according to a maxim that you could will to be a universal law. Simply put, it's the line of reasoning that says "the other guy is rational like me, so he'll do what I do. What does one do if he believes that? Well, if we both snitch, we're sunk. If we both stay mum, we're in great shape. So we'll stay mum." Is that rational? I dunno. Kant might have argued it is. But in any case, people do consider this argument, as well, presumably, as the one for the Nash equilibrium. 
But in either of the cases where the person is told what the other will do, there is less role for the categorical imperative; one is being put more in the Nash frame of mind. Now it is quite interesting that people still cooperate a fair amount of the time when they know the other person is staying mum; I think they are thinking of the other person's action as the outcome of the categorical imperative reasoning, and they feel some moral pressure to stay with the categorical imperative reasoning. Whereas they are easily swayed to completely dump that reasoning in the case when told the other person snitched: the other has already betrayed the categorical imperative. Still, it is a bit paradoxical that people are more likely to cooperate when they are not sure whether the other person is doing so; I think the uncertainty makes the story that "he will do what I do" more vivid, and the tempting benefit of snitching when the other stays mum less vivid, because one doesn't know *for sure* that the other has stayed mum. Whether that all fits into the "quantum metaphor" I don't know, but it seems we can get quite a bit of potential understanding here without invoking it. Moreover there probably already exists data to help explore some of these ideas, namely about how the same individual behaves under the different certain and uncertain conditions, in anonymous trials guaranteed not to involve repetition with the same opponent.
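
The arithmetic behind the "paradox" in the figures quoted in this item can be sketched as follows. This is not Pothos and Busemeyer's model; it only assumes that an uninformed player holds some definite belief about the other's move and then behaves as in the informed cases, and it treats the rounded percentages above as exact. The variable and function names are hypothetical.

```python
P_SNITCH_IF_OTHER_SNITCHES = 1.0  # told the other will snitch: always snitch
P_SNITCH_IF_OTHER_MUM = 0.8       # told the other stays mum: mum about 20% of the time
P_SNITCH_UNINFORMED = 0.6         # not told: mum about 40% of the time


def classical_mixture(belief_other_snitches):
    """Law of total probability: average the two informed behaviours,
    weighted by the player's belief that the other will snitch."""
    q = belief_other_snitches
    return q * P_SNITCH_IF_OTHER_SNITCHES + (1 - q) * P_SNITCH_IF_OTHER_MUM


def interference_term(belief_other_snitches):
    """Gap between the observed uninformed rate and the classical mixture;
    a quantum-style model has to supply this as an interference term."""
    return P_SNITCH_UNINFORMED - classical_mixture(belief_other_snitches)


beliefs = [k / 10 for k in range(11)]
lowest_classical_rate = min(classical_mixture(q) for q in beliefs)
gaps = [interference_term(q) for q in beliefs]
```

Whatever the belief, the classical mixture stays between 0.8 and 1.0, so the observed 0.6 cannot be reached and every entry of `gaps` is negative. A model with destructive interference supplies that negative term; the superrationality story supplies it instead by saying the uninformed player is not reasoning from a definite belief about the other at all.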

Less relevant to quantum theory, but perhaps relevant in assessing how important voting paradoxes are in the real world, is an entirely non-quantum point:

(4) A claim by Piergiorgio Odifreddi, that the 1976 US election is an example of Condorcet's paradox of cyclic pairwise majority voting, is prima facie highly implausible to anyone who lived through that election in the US. The claim is that a majority would have favored, in two-candidate elections:

Carter over Ford (as in the actual election)

Ford over Reagan

Reagan over Carter

I strongly doubt that Reagan would have beat Carter in that election. There is some question of what this counterfactual means, of course: using polls conducted near the time of the election does not settle the issue of what would have happened in a full general-election campaign pitting Carter against Reagan. In "Preference Cycles in American Elections", Electoral Studies 13: 50-57 (1994), as summarized in Democracy Defended by Gerry Mackie, political scientist Benjamin Radcliff analyzed electoral data and previous studies concerning the US Presidential elections from 1972 through 1984, and found no Condorcet cycles. In 1976, the pairwise orderings he found for (hypothetical, in two of the cases) two-candidate elections were Carter > Ford, Ford > Reagan, and Carter > Reagan. Transitivity is satisfied; no cycle. Obviously, as I've already discussed, there are issues of methodology, and how to analyze a counterfactual concerning a general election. More on this, perhaps, after I've tracked down Odifreddi's article. Odifreddi is in the Sci Am article because an article by him inspired Gavriel Segre to try to show that such problems with social choice mechanisms like voting might be absent in a quantum setting.
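
The difference between the two sets of pairwise results for 1976 can be checked mechanically. The sketch below encodes only the orderings stated above; the function names are hypothetical.

```python
from itertools import permutations


def candidates_in(beats):
    """All candidates mentioned in a set of (winner, loser) pairs."""
    return {c for pair in beats for c in pair}


def is_cyclic(beats):
    """True if the pairwise-majority relation contains a cycle x > y > z > x."""
    return any((x, y) in beats and (y, z) in beats and (z, x) in beats
               for x, y, z in permutations(candidates_in(beats), 3))


def social_ranking(beats):
    """Return the ranking consistent with every pairwise result, or None
    if no such transitive ranking exists."""
    for order in permutations(sorted(candidates_in(beats))):
        if all((order[i], order[j]) in beats
               for i in range(len(order))
               for j in range(i + 1, len(order))):
            return list(order)
    return None


# Pairwise results as (winner, loser), taken from the text above.
claimed_by_odifreddi = {("Carter", "Ford"), ("Ford", "Reagan"), ("Reagan", "Carter")}
found_by_radcliff = {("Carter", "Ford"), ("Ford", "Reagan"), ("Carter", "Reagan")}
```

With these inputs, `is_cyclic(claimed_by_odifreddi)` is true and no ranking exists, while `social_ranking(found_by_radcliff)` gives Carter, Ford, Reagan. The whole dispute therefore turns on the single hypothetical Carter versus Reagan contest.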

Odifreddi is cited by Musser as pointing out that democracies usually avoid Condorcet paradoxes because voters tend to line up on an ideological spectrum---I'm just sceptical until I see more evidence, that that was not the case also in 1976 in the US. I have some doubt also about the claim that Condorcet cycles are the cause of democracy "becoming completely dysfunctional" in "politically unsettled times", or indeed that it does become completely dysfunctional in such times. But I must remember that Odifreddi is from the land of Berlusconi. But then again, I doubt cycles are the main issue with him...