I'm not a statistician, and as a quantum theorist of a relatively abstract sort, I've done little actual data analysis. But because of my abstract interests, the nature of probability and its use in making inferences from data are of great interest to me. I have some relatively ill-informed thoughts on why the "classical statistics" community seems to have been quite resistant to "Bayesian statistics", at least for a while; they may be of interest, or at least worth logging for my own reference. Take this post in the original (?) spirit of the term "web log", rather than as a polished piece of the sort that many blogs, functioning more like online magazines, seem to aim at nowadays.

The main idea is this. Suppose doing Bayesian statistics is thought of as actually adopting a prior which specifies, say, one's initial estimate of the probabilities of several hypotheses, and then, on the basis of the data, computing the posterior probabilities of those hypotheses; in other words, what is usually called "Bayesian inference". That may be a poor way of presenting the results of an experiment, although it is a good way for individuals to reason about how the results of the experiment should affect their beliefs and decisions. The problem is that different users of the experimental results, e.g. different readers of a published study, may have different priors. What one would like instead is to present these users with a statistic, that is, some function of the data, much more succinct than the data themselves, but just as useful, or almost as useful, for making the transition from prior probabilities to posterior probabilities, that is, for updating one's beliefs about the hypotheses of interest to take the new data into account. Of course, for a compressed version of the data (a statistic) to be useful, it is probably necessary that the users share certain basic assumptions about the nature of the experiment. These assumptions might involve the probabilities of various experimental outcomes, or sets of data, if various hypotheses are true (or if a parameter takes various values), i.e., the likelihood function; they might also involve a restriction on the class of priors for which the statistic is likely to be useful. These assumptions should be spelled out, and, if it is not obvious, so should the way the statistic can be used in computing posterior probabilities.
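To make this concrete, here is a minimal sketch in Python of one statistic that can play this role when there are just two simple hypotheses: the likelihood ratio. Everything specific in it is my own assumption for illustration, not anything from a real study: the binomial model, the two hypotheses (success probability 0.5 versus 0.7), the data (14 successes in 20 trials), and the function names. The point is only that the experimenter publishes one number, and each reader combines it with their own prior via posterior odds = prior odds × likelihood ratio.

```python
from math import comb


def likelihood(k, n, p):
    """Binomial probability of k successes in n trials, success probability p."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)


def likelihood_ratio(k, n, p1, p0):
    """Published statistic: how much more probable the data are under H1 than H0."""
    return likelihood(k, n, p1) / likelihood(k, n, p0)


def posterior_prob(prior_h1, lr):
    """A reader's posterior probability of H1, from their own prior and the published ratio."""
    prior_odds = prior_h1 / (1 - prior_h1)
    posterior_odds = prior_odds * lr
    return posterior_odds / (1 + posterior_odds)


# Hypothetical experiment (assumed numbers): 14 successes in 20 trials,
# H0: p = 0.5 versus H1: p = 0.7.
lr = likelihood_ratio(14, 20, p1=0.7, p0=0.5)

# Three hypothetical readers with different priors on H1 use the same statistic.
for prior in (0.1, 0.5, 0.9):
    print(f"prior {prior:.1f} -> posterior {posterior_prob(prior, lr):.3f}")
```

Note the shared assumptions doing the work here: all readers must accept the binomial likelihood and must restrict their priors to these two hypotheses. A reader whose prior spreads over other values of the success probability would need more than this single number.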

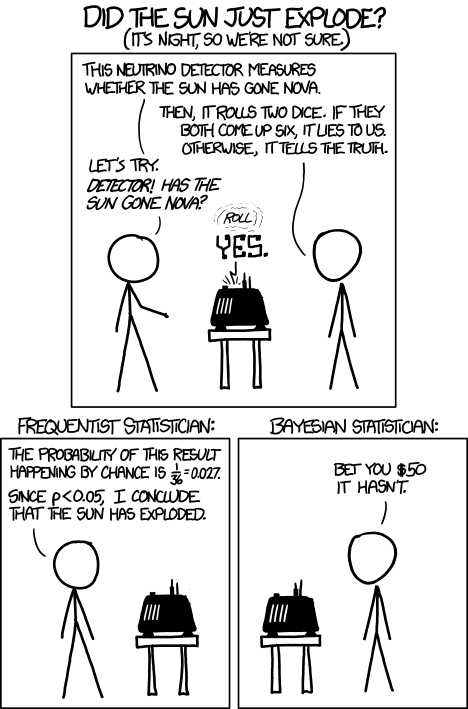

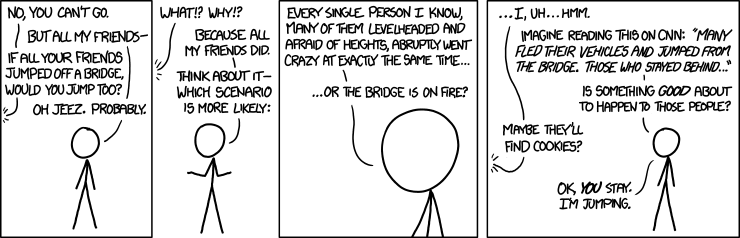

It seems to me likely that many classical or "frequentist" statistics can be used in such a way; but, quite possibly, classical language, like saying that statistical inference leads to "acceptance" or "rejection" of hypotheses, tends to obscure this more desirable use of the statistic as a potential input to the computation of posterior probabilities. In fact, I think people naturally want some notion of what the posterior probability of a hypothesis is; this is one source of the erroneous tendency, still sometimes found among the public, to confuse confidence levels with probabilities of hypotheses. Sometimes advocacy of classical statistical tests may go with an ideological resistance to the computation of posterior probabilities, but I suppose not always. It also seems likely that in many cases, publishing actual Bayesian computations may be a good alternative to classical procedures, particularly if one is able to summarize in a formula what the data imply about posterior probabilities, for a broad enough range of priors that many or most users would find their prior beliefs adequately approximated by one of them. But in any case, I think it is essential, in order to properly understand the meaning of reports of classical statistical tests, to understand how they can be used as inputs to Bayesian inference. There may be other issues as well, e.g. that in some cases classical tests may make suboptimal use of the information available in the data. In other words, they may not provide a sufficient statistic: a function of the data that contains all the information available in the data about some random variable of interest (say, whether a particular hypothesis is true or not). Of course, whether or not a statistic is sufficient will depend on how one models the situation.
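The point about sufficiency can be illustrated with a small Python sketch, again under assumptions of my own choosing: independent Bernoulli trials, two simple hypotheses (0.5 versus 0.7), made-up data, and a hypothetical rule `rejects_h0` that rejects when at least 7 of 10 trials succeed (the threshold is arbitrary, not derived from any particular significance level). Under this model the number of successes is a sufficient statistic, so reordering the data leaves the posterior unchanged, whereas the bare accept/reject verdict lumps together data sets that support quite different posteriors.

```python
from math import isclose, prod


def sequence_likelihood(data, p):
    """Probability of an exact 0/1 sequence under independent trials with success probability p."""
    return prod(p if x else 1 - p for x in data)


def posterior_h1(data, p0, p1, prior_h1):
    """Posterior probability of H1 (success probability p1) against H0 (p0)."""
    w1 = sequence_likelihood(data, p1) * prior_h1
    w0 = sequence_likelihood(data, p0) * (1 - prior_h1)
    return w1 / (w0 + w1)


def rejects_h0(data, threshold=7):
    """Hypothetical accept/reject rule: reject H0 if successes >= threshold."""
    return sum(data) >= threshold


a = [1, 1, 0, 1, 0, 1, 1, 0, 1, 1]  # 7 successes (assumed data)
b = sorted(a)                        # same 7 successes, different order
c = [1] * 10                         # 10 successes

post_a = posterior_h1(a, 0.5, 0.7, prior_h1=0.5)
post_b = posterior_h1(b, 0.5, 0.7, prior_h1=0.5)
post_c = posterior_h1(c, 0.5, 0.7, prior_h1=0.5)

# The count is sufficient: same count, same posterior (up to rounding).
print(isclose(post_a, post_b))

# The verdict is not: both data sets are "rejections", yet the posteriors differ.
print(rejects_h0(a), rejects_h0(c), round(post_a, 3), round(post_c, 3))
```

As noted above, sufficiency is relative to the model: if the trials were not exchangeable (say, the success probability drifted over time), the order of the outcomes would matter and the count alone would no longer suffice.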

Most of this is old hat, but it is worth keeping in mind, especially as a Bayesian trying to understand what is going on when "frequentist" statisticians get defensive about general Bayesian critiques of their methods.